CTO

Overall, our data reveals something crucial about CTOs: this is the cohort that has gone the deepest, seen the most, and come back with the most specific opinions.

92% of CTOs are more excited about AI than a year ago. That’s the highest of any persona in this survey.

But this isn't the excitement of someone who has watched a demo. CTOs have shipped with these tools. They've debugged the failures, navigated the edge cases, and seen what's on the other side. Their optimism is load-bearing. It comes from evidence.

How has CTOs’ excitement changed toward AI?

When CTOs describe what's blocking them from scaling AI, they don't mention bandwidth or budget. CTOs point directly at the tools: too much manual context required (67%), output quality that isn't production-ready (58%), models that don't learn from feedback (46%). These are capability gaps that the current generation of AI tooling cannot yet reliably fix.

The cohort that has gone much further into AI implementation than anyone else in this survey has a very clear view of what needs to come next. Their wishlist: cheaper inference, fewer hallucinations, and MLOps infrastructure that can actually support agents at scale.

TOP 3 BARRIERS TO SCALING AI

92% of engineers use AI to write code, but it has yet to penetrate deeper into the development lifecycle.

Across six stages of the software development lifecycle, CTOs reveal a clear AI maturity curve that shows exactly where AI has earned engineering trust, and where it hasn’t.

Code Generation sits alone at the top, and adoption is near-universal. 92% of teams use AI for code generation, with 39% at scaled deployment. In 2026, AI-assisted coding is table stakes. Teams not using it are the exception.

The middle of the stack shows momentum without consolidation. Even with strong adoption, Documentation (72%), Debugging (72%), Code Review (67%), and Test Automation (61%) show lower “scaled” rates. Teams have brought AI into these workflows, but it has yet to become the default.

DevOps and infrastructure anchor the bottom with just 44% adoption and only 11% scaled. The hesitation makes intuitive sense. DevOps requires deep system context, reliability guarantees, and the kind of stateful reasoning that current models handle poorly. It’s also the stage where failures are most visible and most expensive.

This gradient from code generation (92%) to DevOps (44%) is the CTO’s maturity curve for 2026.

36% of CTOs spend $51-100 per developer per month, the largest cohort. But 28% are already above $200/dev/month, while another 28% stay under $50.

Beyond that, there’s little middle ground. Teams either spend modestly, at $100 or less, or aggressively, above $200.

For founders wondering what their CTOs should be budgeting, $51–100 per developer per month is the median reality.

But teams that aspire to AI-assisted development across the entire lifecycle should expect to pay $200+ per developer per month. Those are the teams most likely to have scaled adoption across code generation, review, testing, and debugging simultaneously.
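The median claim above can be checked with a little arithmetic. The sketch below is illustrative only: it uses the three shares reported in this section and assumes that the unreported remainder sits in a hypothetical $101-200 bucket, which the survey text does not state.

```python
# Shares of CTOs by monthly AI spend per developer, as reported in this section.
reported = {"under $50": 28, "$51-100": 36, "above $200": 28}

# ASSUMPTION: the share not accounted for above falls in a $101-200 bucket.
assumed_mid_high = 100 - sum(reported.values())

# Buckets listed in ascending order of spend.
buckets = [
    ("under $50", reported["under $50"]),
    ("$51-100", reported["$51-100"]),
    ("$101-200 (assumed)", assumed_mid_high),
    ("above $200", reported["above $200"]),
]


def median_bucket(ordered_buckets):
    """Return the first bucket at which the cumulative share reaches 50%."""
    cumulative = 0
    for label, share in ordered_buckets:
        cumulative += share
        if cumulative >= 50:
            return label
    raise ValueError("shares sum to less than 50%")


print(median_bucket(buckets))
```

Under these assumptions the cumulative share crosses 50% inside the $51-100 bucket, which is consistent with describing that range as the median.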

On paper, the value case looks solid. 61% of CTOs report positive ROI, with another 25% still neutral. Only 14% describe AI tooling as expensive or unsustainable.

At first glance, that’s a healthy picture. Most teams believe AI is paying for itself. But scratch beneath the surface, and a tension emerges.

Cost vs. Value Sentiment

The tools deliver real value. But they don’t feel cheap enough for the value they create. That also explains why “lower cost” is the single biggest lever CTOs say would 10× adoption.

For the 86% of CEOs planning to increase AI spend, this is the unit-economics reality. The money is flowing, the ROI is positive, but the cost curve hasn’t bent yet.

The journey from AI proof-of-concept to production deployment is where enthusiasm collides with engineering reality.

For 85% of CTOs, at least 10% of their AI POCs make it to production. Nearly half (47%) convert 30-60% of their POCs, and none report zero conversions.

That alone is telling. AI POCs are no longer vaporware or innovation theater. Most deliver real production value. But the drop-off matters just as much. Even in strong teams, 40–70% of POCs never make it to production. Understanding why is critical.

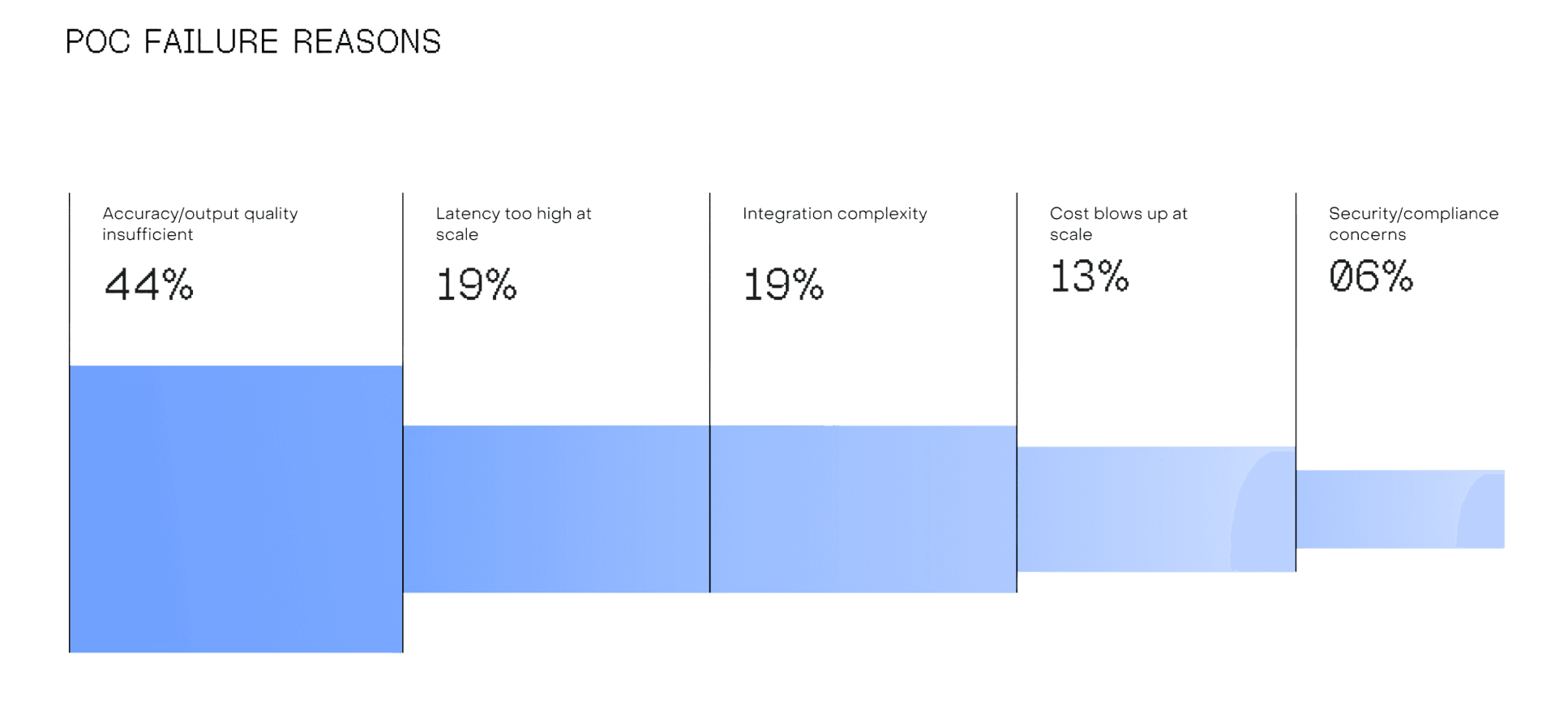

Why AI POCs Die

Among CTOs who’ve seen POCs fail, accuracy and output quality are the biggest culprits. They kill 44% of POCs. Latency at scale (19%) and integration complexity (19%) are tied for second.

The accuracy problem deserves emphasis. It’s not that models can’t do the task. It’s that they can’t do it reliably enough for production. The jump from an 80% accurate demo to a 95% reliable production system is where most engineering effort and cost concentrates, and where most POCs stall.
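To make the size of that jump concrete, here is a minimal sketch that converts the two accuracy figures into expected failures. It assumes accuracy can be read as a simple per-request success rate, which is a simplification; the function name is hypothetical.

```python
def failures_per_thousand(accuracy_pct: int) -> int:
    """Expected failed outputs per 1,000 requests at a whole-percent accuracy."""
    return 1000 * (100 - accuracy_pct) // 100


demo = failures_per_thousand(80)        # the 80% accurate demo
production = failures_per_thousand(95)  # the 95% reliable production target

# How many times fewer failures production must have compared with the demo.
reduction_factor = demo / production

print(demo, production, reduction_factor)
```

Read this way, moving from 80% to 95% means cutting failures from 200 to 50 per 1,000 requests, a fourfold reduction rather than a 15-point tweak.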

From 27 open-ended responses, the success playbook is remarkably consistent.

Most CTOs are neither building nor buying. They’re calling APIs.

While 35% of founders prefer to build AI in-house and 39% favour hybrid models, CTOs offer an on-the-ground look at the realities of implementation, and they have a different story to share.

33% Buying specific tools

67% Not buying specialised tools

But among the 33% who are, the purchases cluster around developer productivity (Cursor, Claude Code, Coderabbit) and a handful of vertical solutions like Retell (voice agents), Meetgeek (meeting intelligence), and n8n (workflow automation).

A note on data currency:

This data reflects our respondents’ preferences as of January 2026. Given the rapid pace of change and releases in frontier models, adoption patterns have evolved meaningfully. We expect current numbers to look quite different, and encourage you to treat this as a directional snapshot rather than a present-day benchmark.

The building blocks above show robust tool selection with strong preferences emerging. But the governance layer tells a different story.

The top three gaps (testing, debugging, and observability) are all governance-adjacent. CTOs know they’re flying without instruments. But they haven’t found instruments they trust.

Observability: No single observability platform has established itself as the default.

22% LangFuse

58% None

Guardrails: Dedicated guardrail tooling remains the least mature part of the AI stack.

14% Guardrails AI

67% None

The AI stack in Indian startups is built code-first and governance-later. Models are chosen, clouds are provisioned, and orchestration frameworks are wired up. But the monitoring, testing, safety, and evaluation layers that separate a demo from a production system are largely absent.

For the 86% of founders planning to increase AI spend, this governance deficit represents both the biggest operational risk and the clearest opportunity for tooling companies to build into.

CTOs are simultaneously reducing general engineering roles and adding AI specialists.

The net headcount may be shrinking, but the composition is shifting. The team of 2027 will look different from the team of 2024. It will have fewer generalists and more builders fluent in production AI skills such as prompting, evaluation, model orchestration, and reliability.

HOW WILL AI IMPACT ENGINEERING HIRING?

Reducing/freezing engineering hiring

This explains why 43% of CTOs still flag a “talent shortage.” They’re not short on engineers. They’re short on engineers with AI-specific skills. The talent gap is about capability more than headcount.

The contrast with the CEO is sharp and worth noting. CEOs see the macro outcome: we need fewer people. CTOs see the execution reality: we need different people. Both are right. The workforce is thinning, and the required skill set is evolving. The result is a talent reshuffle, not a reduction.