CPO

AI adoption across the PM value chain follows a striking gradient, one that mirrors the CTO's SDLC curve but with a distinctly product-flavoured twist.

For analytical, decision-shaping tasks like A/B test analysis and feature prioritisation, adoption falls to just 25%. The further a task moves from “make something” toward “decide something”, the less PMs turn to AI.

But as models mature and agentic tooling becomes more tightly woven into PM workflows, the judgment gap may narrow faster than expected. Given the pace at which AI capabilities have evolved, it is worth watching whether analytical tasks move up the adoption curve in the next 12 months.

PMs have embraced AI where the cost of a mediocre output is as little as a revision. They haven't embraced it where the cost is a wrong decision.

This gap reflects a fundamental difference in what PMs are willing to delegate. Generating a first draft of a PRD is low-risk; a human will refine it. But deciding which feature to build next, or interpreting what an A/B test result means for the roadmap, carries real product consequences.

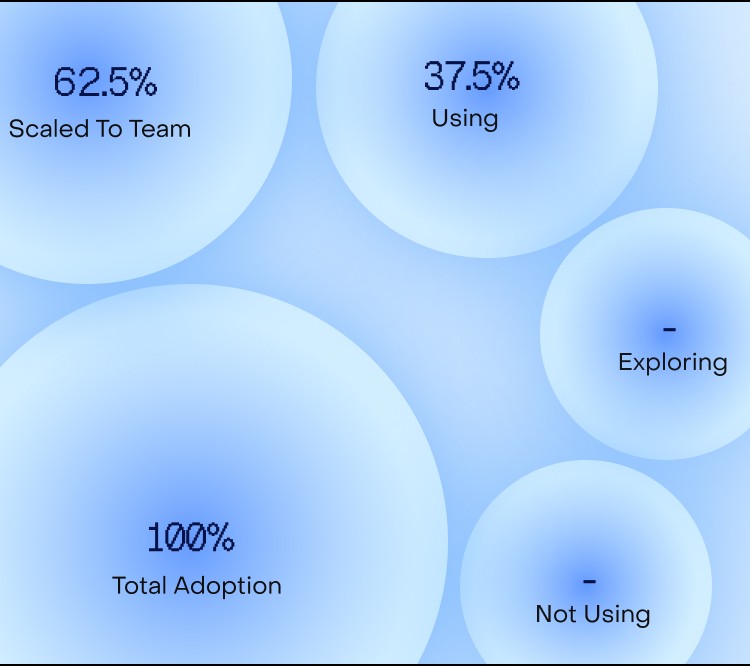

AI has dramatically accelerated the creative half of the PM workflow. 100% of CPOs report a reduction in time from idea to prototype, with 62.5% reporting a reduction of more than 50%.

This confirms a broader survey finding: 45% of founders cite “faster experimentation” as their most unexpected AI benefit. The CPO data provides the product-specific proof point. The experimentation speed-up is real, it is dramatic, and it is being felt most acutely in the prototyping phase.

And the compounding effect matters. When a product team can turn an idea into a testable prototype in a fraction of the time it used to take, the entire development cadence shifts. More ideas get tested. Bad ideas die faster. Good ideas reach users sooner. For product teams, speed is more than just a benefit of AI adoption; it has become the primary driver of it.

Across four categories of PM tools (PRDs, prototyping, design, and user research), a small number of tools dominate, leaving a major gap.

Figma Make leads design at 75%. But practitioners are candid: a significant portion of Figma Make's output needs heavy rework before it's usable. It's a starting point, not a finishing line.

For everything else, CPOs are reaching for Claude. It dominates PRDs with a 50% share and anchors prototyping workflows alongside ChatGPT. Most CPOs now build functional prototypes through dialogue rather than a canvas.

| Tool | Category | Typical uses |
| --- | --- | --- |
| Figma Make | Design | UI design, component generation, design iteration |
| Claude | PRDs + Prototyping | Writing specs, conversational prototyping, code generation |
| ChatGPT | Prototyping | Rapid ideation, interface mocking, dialogue-based prototyping |
| Cursor | Prototyping + Dev | Vibe coding, pushing designer-built code to production |
| Lovable | Prototyping | No-code/low-code app prototyping, PM-led concept building |
| Granola | User Research | Meeting notes, transcript capture, session summaries |
| Dovetail | User Research | Interview synthesis, affinity mapping, insight extraction |
| Obsidian + Claude | Knowledge Management | Second brain, context management, personal AI workflows |

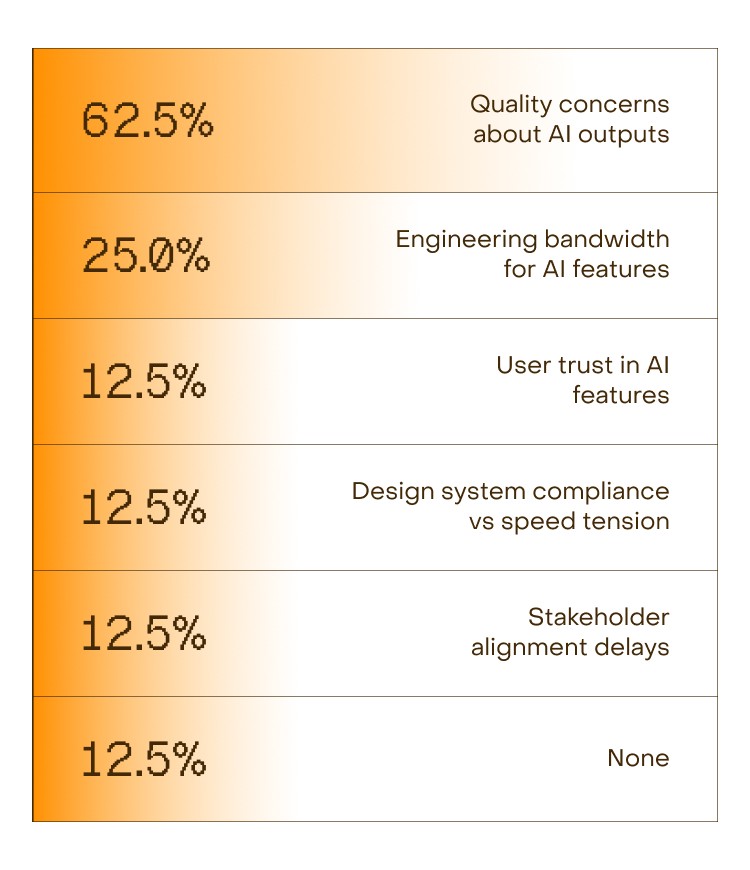

When asked about the biggest challenges in adopting AI for product work, one answer towers above the rest.

CPOs are believers, but measured ones. 71% report being more excited about AI than a year ago, a strong signal, though slightly more tempered than the 83% ecosystem average. The gap comes from their proximity to the user. CPOs have seen enough of what AI can and can't do in a product context to be enthusiastic without being evangelical.

That measured confidence shapes how they're building.

When asked how product teams are formalising AI adoption, the most common approaches are documentation and individual tool budgets. One captures what works; the other gives PMs permission to find it. These teams are also actively spreading what's working through workshops, hackathons, and dedicated AI champions, rather than leaving it to individual discovery.

But the ceiling, when it comes, isn't cultural or organisational. Tool maturity and output quality register as scaling barriers at 86%, the highest of any persona in this survey. CPOs are waiting for the tools to be good enough to trust at scale, which is exactly the kind of bar you'd expect from the person who ships what users actually experience.

Top Barriers to Scaling